A thoughtful exploration of how AI can support social care, reduce loneliness, and reflect human values through intentional, community-led technology design.

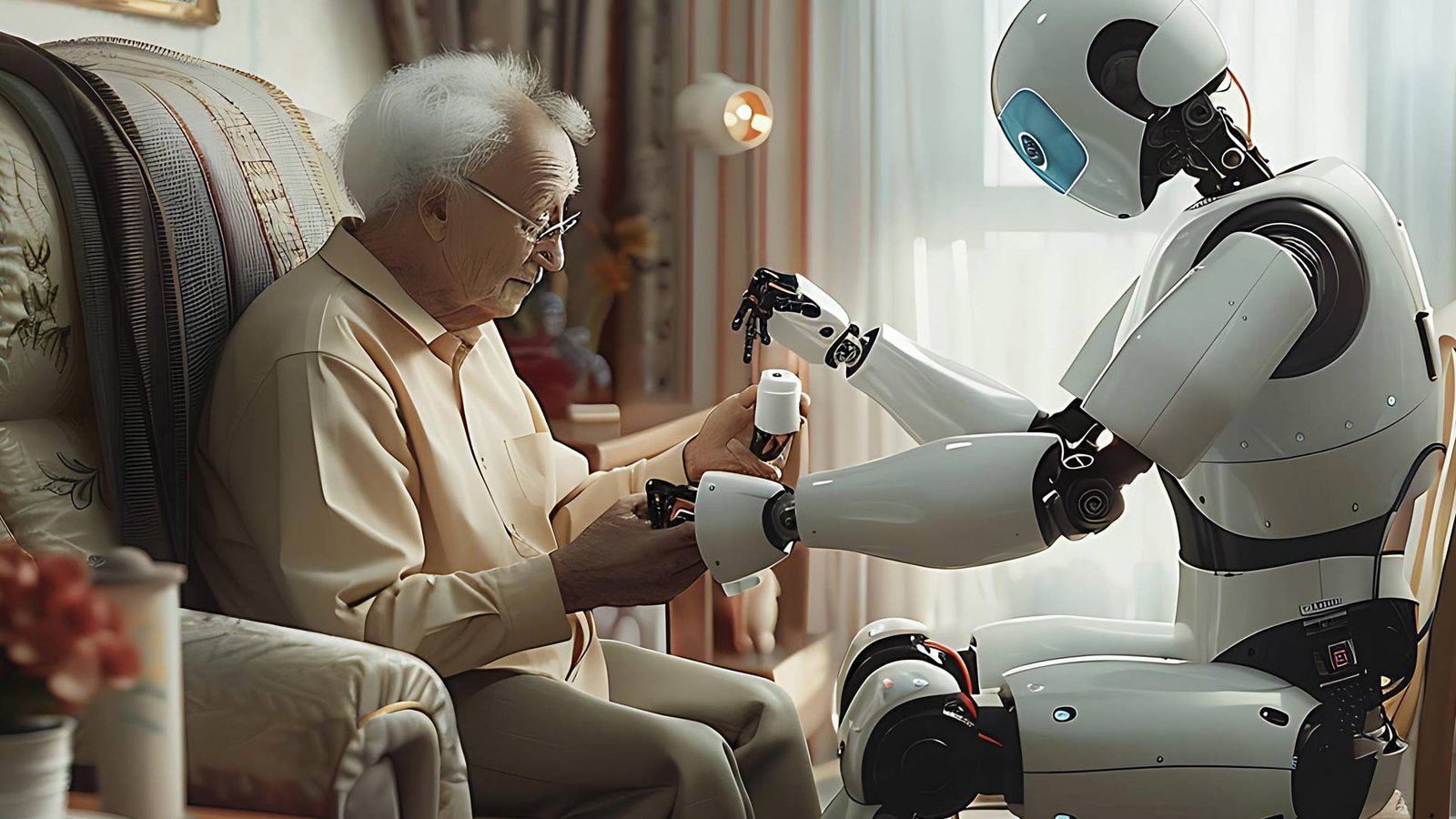

Artificial intelligence has often been viewed as cold, mechanical, and indifferent to the human world it increasingly shapes. Is that a fair assessment? Maybe, but when it comes to social good, it has the potential to become a real pillar when trained and deployed wisely. That said, a healthy dose of scepticism is wise, especially when considering how AI might come to understand and genuinely serve human needs. Applied in the context of social care, AI can be more than just an automated, data-driven assistant; it can be a powerful companion on hand to ease loneliness and act as a bridge for human connection, particularly as older people become less mobile and sociable.

This is where thoughtful technology can truly shine, and in this article, I will explore whether AI can learn from human behaviour and respond with genuine empathy, rather than cold automation.

What It Means for AI to “Care”

Let’s be clear: AI can’t feel. It doesn’t miss its grandma or worry about the neighbours. But that doesn’t mean it can’t help us respond to loneliness with more sensitivity. Teaching AI to care isn’t about building machines that love us; it’s about designing technology that notices, listens, and supports with specifically trained empathy that is neither obtrusive nor patronising. Care has always embodied mutual respect, and that respect often deepens into friendship. Does that suggest AI can be respectful and even develop friendships with those being cared for? In practice, it means AI that pays attention to someone’s preferences, learns from their routines, and helps them stay connected to others. And most importantly, it means involving people in the design of these tools, not just handing them tech and hoping it sticks.

There is, of course, a counterargument to consider. Whilst we might train and develop AI products that care empathetically, how anthropomorphic or human-like do we want them to be? Might it be sensible, or even good practice, to retain a slightly robotic voice and persona, simply because we should never want or allow these solutions to replace real human contact and interaction? Augmentation is a worthy goal; we will never have sufficient carers or care hours to meet the needs of those in social care, but trained, empathetic, idiomatic, secure AI solutions could well go a very long way to meeting that ever-growing need.

Social Impact Is a Design Choice

I know from experience that thoughtful technology doesn’t emerge from good intentions alone. It comes from deliberate design choices that place social goals at the centre. For example, in the Netherlands, the Tovertafel was created to bring innovation to the care sector through an interactive game system. Since being introduced over 10 years ago, it has become an industry standard and enhanced the quality of life in communities around the world. Thoughtful technology is making an impact; we just need to be wise in how we use it and keep humans at the forefront of every implementation. Interactive games like those the Tovertafel provides can work wonders when people engage with them correctly. With careful consideration and regular virtual and in-person check-ins with the user, technology could safely be rolled out and become a normal component of elderly patient care.

Community-Led Development Works Better

Thoughtful technology can either empower or exclude, which is why its purpose must be established before it is rolled out.

Many of the most promising examples of socially responsible AI come from communities working closely with technologists, rather than being treated as passive recipients of innovation. In Barcelona, city officials worked with residents to build a data commons, where citizens can control how their data is used and help decide which AI projects are pursued.

These initiatives succeed because they start with the social issue, not the shiny tool. They ask: what problem are we trying to solve, and who does it affect most? From there, they build systems that are transparent, accountable, and grounded in the realities of people’s lives.

Regulation Is Necessary, But Not Sufficient

Of course, not every AI decision can or should be left to well-meaning developers. Clear rules are essential to prevent harm. Governments and regulatory bodies have started to catch up: the EU’s AI Act, for example, includes explicit prohibitions on systems that pose unacceptable risks to rights and safety, such as AI-based manipulation and deception, and rightly so. But regulation often lags behind innovation, particularly with a technology moving as quickly and widely as AI is at present. The inherent risk is that by the time a law is passed, the tech may have already been deployed. That’s why a culture of responsibility is just as important as external oversight. Developers should be trained to think ethically, not just technically. Companies need incentives to prioritise long-term social value, not just quarterly profits. The public needs better tools to understand how AI systems affect their lives and how to challenge them when they don’t work.

So, Can AI Care?

Ultimately, if AI is used responsibly and its users are taught how to work with it, it can be a great social tool to help combat loneliness. AI most likely can’t feel empathy, but it can imitate human emotions and apply them in the scenarios that call for them.

The central question, whether we can teach AI to care, isn’t really about giving machines emotions but about embedding human values and social priorities into how they’re designed and developed. We’re not trying to make AI feel empathy. We’re trying to make it act in ways that reflect values we care about: equity, fairness, and dignity. That’s both more realistic and more urgent, and it helps bridge the gap between those desperate for companionship and the dearth of human carers able to fully meet that very human emotional need.

AI can’t “care” in a moral or emotional sense. But we can, and must, build systems that reflect care; that means making thoughtful choices about goals, data, and especially governance. It means elevating social needs above technical convenience but never at the expense of expedience. Ultimately, it’s not about whether machines can be compassionate; we already have early, proven solutions that continue to evolve in depth and capability. It’s about whether we can be compassionate in how we design and deploy them. That’s a challenge worth taking seriously, not because AI will ever love us back, but because we owe it to each other to shape the future with empathetic intention, using technology to help fill the companionship voids in each other’s lives.
